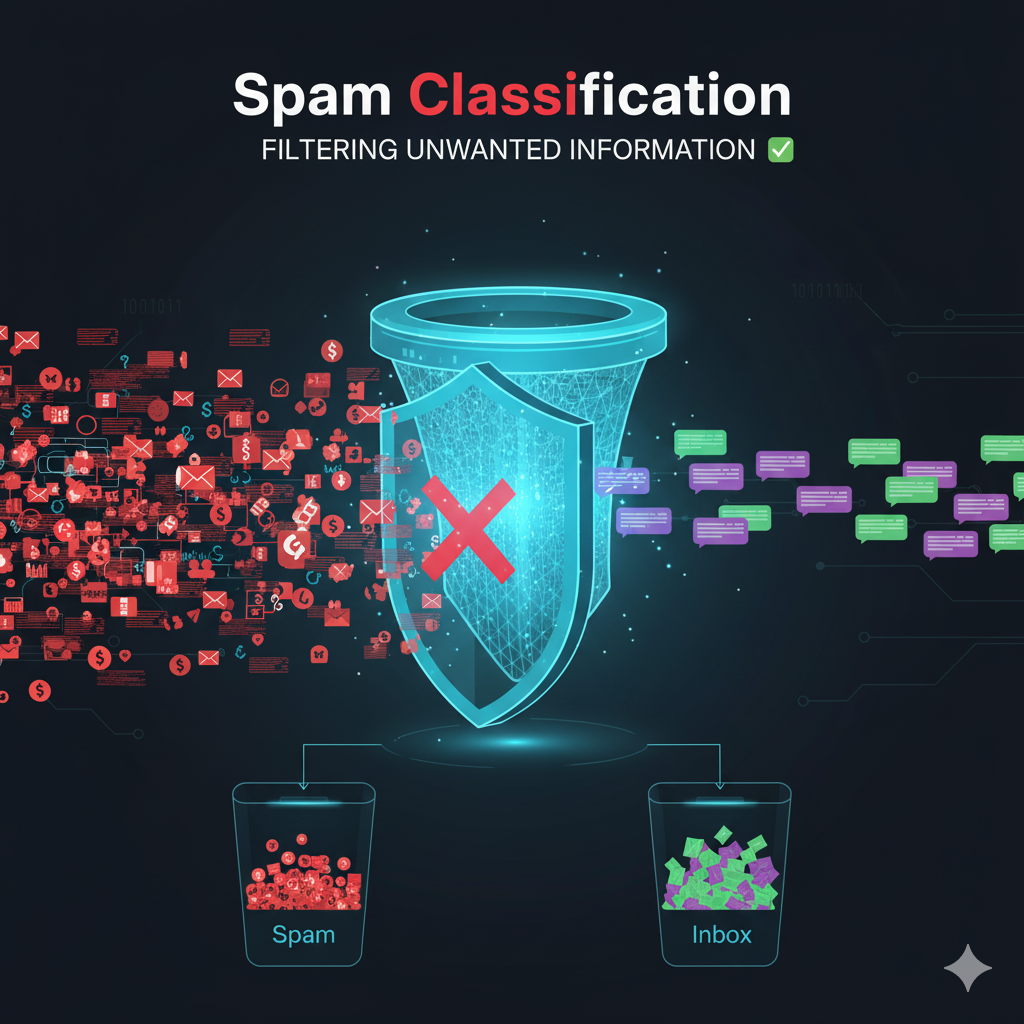

Spam Classification

A concise overview of how spam classification works in practice: the problem framing, data, features, models, evaluation, and production concerns. Use this as the conceptual backbone for any implementation.

What Is Spam Classification?

Goal: Automatically label incoming content (e.g., emails, SMS, social posts, form submissions, reviews) as spam or not spam.

Framing:

- Binary classification (spam vs. ham), or multi-class/multi-label classification when finer categories matter (e.g., promotional, phishing, malware, scams).

- Granularity: message-level, sender-level, campaign-level.

- Constraints: latency (near-real-time), evolving adversaries, privacy/security rules.

Data & Labeling

Sources: historical inboxes, spam traps, abuse reports, honeypots, public corpora (e.g., SMS spam datasets), synthetic data for edge cases.

Labeling strategies:

- Manual annotation (experts, crowd) with clear guidelines.

- Heuristic/weak supervision: rules (e.g., URL shorteners + certain terms), blocklists/allowlists, reputation signals.

- Programmatic labeling & aggregation: multiple weak signals combined with label models.

- Active learning: prioritize uncertain/novel examples for review.

- Continual labeling: keep labeling fresh traffic to track concept drift as adversaries adapt.
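The heuristic and programmatic labeling strategies above can be sketched with a few labeling functions that vote or abstain. This is a minimal illustration, not a production recipe: the shortener list, the allowlisted address, the bait terms, and the 0.7 caps ratio are all made-up assumptions, and plain majority voting stands in for the label model mentioned above.

```python
import re
from collections import Counter

SPAM, HAM, ABSTAIN = 1, 0, -1

# Illustrative values only; real lists would come from your own reputation data.
SHORTENERS = ("bit.ly", "tinyurl.com", "t.co")
ALLOWLIST = frozenset({"billing@example.com"})

def lf_shortener_and_bait(msg):
    """Vote SPAM when a URL shortener co-occurs with a bait term."""
    lower = msg["text"].lower()
    has_shortener = any(s in lower for s in SHORTENERS)
    has_bait = re.search(r"\b(free|winner|prize)\b", lower) is not None
    return SPAM if has_shortener and has_bait else ABSTAIN

def lf_allowlisted_sender(msg):
    """Vote HAM for senders on the allowlist."""
    return HAM if msg["sender"] in ALLOWLIST else ABSTAIN

def lf_shouting(msg):
    """Vote SPAM when most letters are upper case (assumed cutoff: 70%)."""
    letters = [c for c in msg["text"] if c.isalpha()]
    if len(letters) >= 10 and sum(c.isupper() for c in letters) / len(letters) > 0.7:
        return SPAM
    return ABSTAIN

LABELING_FUNCTIONS = [lf_shortener_and_bait, lf_allowlisted_sender, lf_shouting]

def weak_label(msg, lfs=LABELING_FUNCTIONS):
    """Majority vote over non-abstaining functions; ties and silence abstain."""
    votes = Counter(v for v in (lf(msg) for lf in lfs) if v != ABSTAIN)
    if votes[SPAM] == votes[HAM]:
        return ABSTAIN
    return SPAM if votes[SPAM] > votes[HAM] else HAM
```

Messages that come back as `ABSTAIN` are natural candidates for manual annotation or active learning.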

Data hygiene: deduplicate near-identical messages, remove exact duplicates across train/test, detect template campaigns, and guard against data leakage (e.g., future reputation info in training).
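Near-duplicate detection can be sketched with character shingles and Jaccard similarity. The shingle size (5), the 0.7 threshold, and the greedy grouping strategy are assumptions chosen for illustration; the pairwise comparison here does not scale, so large corpora would typically use MinHash or locality-sensitive hashing instead.

```python
import re

def shingles(text, k=5):
    """Set of character k-grams from lowercased, whitespace-collapsed text."""
    norm = re.sub(r"\s+", " ", text.lower()).strip()
    if len(norm) < k:
        return {norm}
    return {norm[i:i + k] for i in range(len(norm) - k + 1)}

def jaccard(a, b):
    """Jaccard similarity of two sets (1.0 when both are empty)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def group_near_duplicates(messages, threshold=0.7):
    """Greedy grouping: a message joins the first group whose
    representative (its first member) is similar enough."""
    groups = []  # list of (representative_shingles, member_indices)
    for idx, text in enumerate(messages):
        sh = shingles(text)
        for rep, members in groups:
            if jaccard(sh, rep) >= threshold:
                members.append(idx)
                break
        else:
            groups.append((sh, [idx]))
    return [members for _, members in groups]
```

The resulting groups can serve as campaign IDs, so that every member of a group lands on the same side of a train/test split.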

Preprocessing

- Normalization: lowercasing (with care for languages), unicode normalization, whitespace collapse.

- Tokenization: word/character/subword; preserve structure like URLs, emails, phone numbers, emojis.

- Redaction/Hashing: personally identifiable information (PII) and secrets.

- Language detection: route to language-specific models or pipelines.

- HTML handling: strip tags while keeping signals (links, forms, hidden text).

- Attachment/meta cues: presence, type, size (without exposing contents unless permitted).
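A minimal sketch of the normalization and tokenization steps, assuming simple regular expressions are good enough to spot URLs, email addresses, and phone numbers. The patterns and the `<URL>`, `<EMAIL>`, `<PHONE>` placeholder names are illustrative choices; replacing matches with placeholders keeps the structural signal while also redacting the raw value.

```python
import re
import unicodedata

# Deliberately simple patterns; real traffic needs more robust matching.
URL_RE = re.compile(r"https?://\S+|www\.\S+")
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")
TOKEN_RE = re.compile(r"<[A-Z]+>|\w+|[^\w\s]")

def preprocess(text):
    """Normalize text and return a list of tokens."""
    # NFKC folds compatibility forms (e.g. fullwidth letters) to plain ones.
    text = unicodedata.normalize("NFKC", text)
    # Lowercase before inserting the upper-case placeholders.
    text = text.lower()
    text = URL_RE.sub(" <URL> ", text)
    text = EMAIL_RE.sub(" <EMAIL> ", text)
    text = PHONE_RE.sub(" <PHONE> ", text)
    # Collapse runs of whitespace.
    text = re.sub(r"\s+", " ", text).strip()
    # Placeholders, words, and single punctuation marks become tokens.
    return TOKEN_RE.findall(text)
```

Plain lowercasing is not appropriate for every language or script, so a multilingual pipeline would likely branch on the detected language before this step.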

Features (Signals)

Content Signals

- Lexical: unigrams/bigrams/char-grams, TF-IDF, misspellings/obfuscations (e.g., “fr€e”, “v1agra”).

- Semantic: word embeddings, sentence embeddings, contextual embeddings (transformers).

- Structure & formatting: excessive punctuation, ALL-CAPS, long token runs, hidden text.

- URL & domain features: number of links, TLD reputation, URL length, shorteners, homograph look-alikes.

- Entity patterns: phone numbers, crypto addresses, affiliate codes, tracking parameters.

- Language style: perplexity vs. typical language, sudden shifts in tone/register.
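Several of the structure and URL signals above reduce to a few lines of counting. The sketch below is one possible feature extractor; the feature names, the shortener list, and the punctuation set are assumptions, and the homograph check is only a crude hint (non-ASCII or punycode hostnames), not real look-alike detection.

```python
import re
from urllib.parse import urlparse

URL_RE = re.compile(r"https?://\S+", re.IGNORECASE)
SHORTENER_HOSTS = {"bit.ly", "tinyurl.com", "t.co"}  # illustrative, not exhaustive

def content_features(text):
    """Return a dict of simple structural and URL-based features."""
    letters = [c for c in text if c.isalpha()]
    urls = URL_RE.findall(text)
    hosts = [urlparse(u).hostname or "" for u in urls]
    punct_runs = [len(m.group()) for m in re.finditer(r"[!?$]+", text)]
    return {
        "n_chars": len(text),
        "caps_ratio": sum(c.isupper() for c in letters) / len(letters) if letters else 0.0,
        "max_punct_run": max(punct_runs, default=0),
        "n_urls": len(urls),
        "n_shortener_urls": sum(h in SHORTENER_HOSTS for h in hosts),
        "max_url_len": max((len(u) for u in urls), default=0),
        # Crude homograph hint: internationalized or punycode hostnames.
        "suspicious_host": any((not h.isascii()) or "xn--" in h for h in hosts),
    }
```

A dictionary like this can be fed to a tree ensemble directly or concatenated with n-gram features for a linear model.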

Metadata & Behavioral Signals

- Sender reputation: domain/IP age, email authentication results (SPF/DKIM/DMARC), historical complaint rates.

- Delivery context: time of day, burstiness, list/unsubscribe headers.

- User interactions: open/click/mark-as-spam rates (use only if ethically and legally permissible).

- Network/graph: shared infrastructure between senders, campaign clustering.

Combine content features and metadata for robustness; content alone is vulnerable to obfuscation.

Model Families

- Linear models: Logistic Regression, Linear SVM — strong with TF-IDF n-grams; fast, interpretable.

- Tree ensembles: Random Forests, Gradient Boosted Trees (XGBoost/LightGBM/CatBoost) — handle heterogeneous signals and interactions.

- Naive Bayes: simple baseline, surprisingly competitive on sparse text.

- Neural networks: CNN/RNN for character/word sequences; Transformers (e.g., BERT-like) for contextual understanding.

- Hybrid stacks: transformer embeddings → linear/GBDT head; or rules + ML (two-tier).

Practical guidance: start with a linear or GBDT baseline + strong features; add transformers for subtle semantics and multilingual robustness.
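To make the Naive Bayes baseline concrete, here is a small multinomial Naive Bayes written from scratch with Laplace smoothing. It is a teaching sketch on toy data, assuming whitespace-tokenized input; the class and method names are made up for this example, and a real project would more likely use an established library.

```python
import math
from collections import Counter

class TinyMultinomialNB:
    """Multinomial Naive Bayes over token lists with add-alpha smoothing."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha

    def fit(self, docs, labels):
        self.classes = sorted(set(labels))
        self.doc_counts = Counter(labels)
        self.word_counts = {c: Counter() for c in self.classes}
        for tokens, label in zip(docs, labels):
            self.word_counts[label].update(tokens)
        self.vocab = set()
        for counts in self.word_counts.values():
            self.vocab.update(counts)
        return self

    def log_scores(self, tokens):
        """Unnormalized log posterior per class; unseen tokens are skipped."""
        n_docs = sum(self.doc_counts.values())
        vocab_size = len(self.vocab)
        scores = {}
        for c in self.classes:
            total = sum(self.word_counts[c].values())
            score = math.log(self.doc_counts[c] / n_docs)
            for t in tokens:
                if t in self.vocab:
                    num = self.word_counts[c][t] + self.alpha
                    score += math.log(num / (total + self.alpha * vocab_size))
            scores[c] = score
        return scores

    def predict(self, tokens):
        scores = self.log_scores(tokens)
        return max(scores, key=scores.get)

# Toy training set, purely for illustration.
train = [
    ("win free prize now", "spam"),
    ("free cash click now", "spam"),
    ("meeting at noon tomorrow", "ham"),
    ("lunch tomorrow at noon", "ham"),
]
model = TinyMultinomialNB().fit([t.split() for t, _ in train], [y for _, y in train])
```

With this toy data, `model.predict("free prize".split())` favors the spam class because both tokens appear only in spam examples.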

Class Imbalance & Thresholding

- Imbalance: spam may be rare or dominant depending on source. Use class weights, focal loss, or resampling (with caution).

- Thresholds: tune decision thresholds for business objectives (e.g., minimize false positives that block legitimate messages).

- Cost-sensitive risk: define asymmetric costs (FP > FN or vice versa) and optimize accordingly.
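Threshold tuning under asymmetric costs can be sketched as a search over candidate thresholds that minimizes average cost on a validation set. The scores, labels, and cost values below are made up; the convention assumed here is that a message is flagged as spam when its score is at or above the threshold.

```python
def expected_cost(scores, labels, threshold, cost_fp, cost_fn):
    """Average cost per message when flagging scores >= threshold as spam."""
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    return (cost_fp * fp + cost_fn * fn) / len(labels)

def best_threshold(scores, labels, cost_fp, cost_fn):
    """Try every observed score (plus 'never flag') and keep the cheapest.
    Ties resolve to the lowest threshold."""
    candidates = sorted(set(scores)) + [float("inf")]
    return min(
        candidates,
        key=lambda t: expected_cost(scores, labels, t, cost_fp, cost_fn),
    )

# Hypothetical validation scores (spam probability) and labels (1 = spam).
val_scores = [0.05, 0.20, 0.40, 0.55, 0.70, 0.90, 0.95]
val_labels = [0, 0, 1, 0, 1, 1, 1]

# Blocking legitimate mail is 10x worse than missing spam.
strict = best_threshold(val_scores, val_labels, cost_fp=10, cost_fn=1)
# Missing spam is 10x worse than blocking legitimate mail.
lenient = best_threshold(val_scores, val_labels, cost_fp=1, cost_fn=10)
```

On this toy data the first setting picks a higher threshold (0.70) than the second (0.40), which is the expected direction: expensive false positives push the threshold up.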

Evaluation

Offline metrics:

- Precision, Recall, F1 (macro/micro as appropriate).

- ROC-AUC and PR-AUC (PR-AUC is more informative with imbalance).

- Expected cost under your cost matrix.

- Calibration (Brier score, reliability plots) for well-behaved probabilities.

- Slice analysis: language, region, sender types, message length, URL count — catch localized failures.

- Robustness tests: adversarial obfuscations, template mutations, URL variants.
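The core offline metrics are short enough to write out by hand, which helps when checking what a library reports. The sketch below assumes binary labels (1 = spam) and predicted probabilities; `average_precision` is one common step-wise estimate of PR-AUC and, as written, does not treat tied scores specially.

```python
def precision_recall_f1(y_true, y_pred):
    """Precision, recall, and F1 for the positive (spam) class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1

def brier_score(y_true, probs):
    """Mean squared error between probabilities and outcomes (lower is better)."""
    return sum((p - t) ** 2 for t, p in zip(y_true, probs)) / len(y_true)

def average_precision(y_true, probs):
    """Mean of precision values at the rank of each positive example."""
    ranked = sorted(zip(probs, y_true), key=lambda pair: -pair[0])
    n_pos = sum(y_true)
    tp, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            tp += 1
            total += tp / rank
    return total / n_pos if n_pos else 0.0

# Toy example: five messages, decision threshold 0.5.
y_true = [1, 0, 1, 1, 0]
probs = [0.9, 0.6, 0.8, 0.3, 0.1]
y_pred = [1 if p >= 0.5 else 0 for p in probs]
```

Running the same functions per slice (language, sender type, URL count) is a simple way to start the slice analysis described above.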

Validation protocol:

- Temporal splits (train on older, validate on newer) to mimic future deployment.

- Campaign-aware splits: keep near-duplicates in the same fold to avoid leakage.

- Cross-validation with care for time and clusters.
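One way to combine temporal and campaign-aware splitting is sketched below. It assumes each record carries a timestamp (`ts`) and a campaign ID (`campaign`), both hypothetical field names. Messages after the cutoff that belong to a campaign already present in training are set aside rather than validated on, which is a conservative choice and not the only reasonable one.

```python
def temporal_campaign_split(records, cutoff):
    """Train on records before the cutoff; validate only on later records
    from campaigns the training set has never seen."""
    train = [r for r in records if r["ts"] < cutoff]
    seen_campaigns = {r["campaign"] for r in train}
    validation, set_aside = [], []
    for r in records:
        if r["ts"] < cutoff:
            continue
        if r["campaign"] in seen_campaigns:
            set_aside.append(r)  # would leak near-duplicates of training data
        else:
            validation.append(r)
    return train, validation, set_aside

# Toy records; 'ts' is a day number here, but any comparable value works.
records = [
    {"id": 1, "ts": 1, "campaign": "A"},
    {"id": 2, "ts": 2, "campaign": "B"},
    {"id": 3, "ts": 5, "campaign": "A"},
    {"id": 4, "ts": 6, "campaign": "C"},
    {"id": 5, "ts": 7, "campaign": "C"},
]
train, validation, set_aside = temporal_campaign_split(records, cutoff=4)
```

The set-aside records can still be scored as a separate slice to see how the model handles ongoing campaigns, as long as they are reported apart from the leak-free validation numbers.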

Online validation:

- Shadow/holdout routing or A/B tests with guardrails; start with conservative thresholds.

Adversarial & Drift Considerations

- Obfuscation: leetspeak, spacing/punctuation noise, image-only text, embedded content.

- Template churn: small edits to bypass fingerprints.

- Domain churn: disposable domains, fast-flux IPs, compromised accounts.

- Model exploitation: probing thresholds with exploratory traffic.

- Concept drift: seasonal campaigns (tax, shopping), emergent scams (crypto, pandemic themes).

Countermeasures:

- Character-level and subword modeling; fuzzy matching; URL decomposition; frequent model refresh; continuous blocklist/graylist updates (where compliant); anomaly detection.
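Two of these countermeasures, undoing simple character obfuscation and decomposing URLs, can be sketched with the standard library. The substitution table is a small illustrative sample, and the subdomain count naively treats the last two labels as the registered domain; handling suffixes such as `co.uk` correctly would need the Public Suffix List.

```python
import re
from urllib.parse import urlparse, parse_qs

# Illustrative look-alike substitutions; real tables are much larger.
LEET_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "7": "t",
    "@": "a", "$": "s", "\u20ac": "e",  # U+20AC is the euro sign
})

def deobfuscate(token):
    """Map look-alike characters to letters, drop separators,
    and collapse runs of three or more identical letters to two."""
    t = token.lower().translate(LEET_MAP)
    t = re.sub(r"[^a-z]", "", t)
    return re.sub(r"(.)\1{2,}", r"\1\1", t)

def decompose_url(url):
    """Split a URL into parts that are useful as separate features."""
    parts = urlparse(url)
    host = parts.hostname or ""
    labels = host.split(".") if host else []
    return {
        "scheme": parts.scheme,
        "host": host,
        "tld": labels[-1] if labels else "",
        "n_subdomains": max(len(labels) - 2, 0),  # naive, see note above
        "path_depth": len([seg for seg in parts.path.split("/") if seg]),
        "query_keys": sorted(parse_qs(parts.query)),
        "is_punycode": any(label.startswith("xn--") for label in labels),
    }
```

Because `deobfuscate` also rewrites legitimate digits, its output is probably best used as an extra feature alongside the original token rather than as a replacement for it.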

Human-in-the-Loop

- Review queues: uncertain predictions (near threshold) and novel clusters.

- Active learning: uncertainty sampling, diversity sampling, and error-focused sampling.

- Feedback loops: weigh user reports against possible abuse of the report function; apply quality control to the resulting labels.
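Uncertainty sampling for the review queue can be as simple as ranking messages by how close their score is to the decision threshold. The function name, the threshold, and the review budget below are assumptions for illustration.

```python
def select_for_review(scored, threshold=0.5, budget=2):
    """Return the IDs of the `budget` messages whose spam probability
    is closest to the decision threshold.

    scored: list of (message_id, spam_probability) pairs.
    """
    ranked = sorted(scored, key=lambda item: abs(item[1] - threshold))
    return [message_id for message_id, _ in ranked[:budget]]

# Hypothetical model outputs.
scored = [("a", 0.02), ("b", 0.48), ("c", 0.91), ("d", 0.55), ("e", 0.30)]
queue = select_for_review(scored)
```

Here the queue contains `b` and `d`, the two messages nearest 0.5. Pure uncertainty sampling tends to pick similar borderline items, which is why the list above pairs it with diversity and error-focused sampling.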

Deployment Architecture (Conceptual)

- Multi-stage pipeline:

  - Fast rules/reputation filter (cheap, high-precision).

  - ML classifier (content + metadata).

  - Heavier semantic model, applied only to a small subset of messages to stay within the latency budget.

- Caching & reputation stores: sender/domain/IP features updated continuously.

- Explainability: feature importances, exemplar messages, policy rationale for appeals.

- Latency budget: optimize feature extraction and model size; batch non-critical signals.
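The multi-stage idea can be sketched as a cascade in which each stage either decides or passes the message on. Everything here is hypothetical: the `Verdict` type, the `low`/`high` confidence band, and the rule and model callables, which are supplied by the caller and stand in for real components.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Verdict:
    label: str                     # "spam" or "ham"
    stage: str                     # which stage made the decision
    score: Optional[float] = None  # model score, if a model decided

def classify(msg, rules, fast_model, heavy_model,
             low=0.2, high=0.8, threshold=0.5):
    """Cascade: rules first, then a fast model, then a heavy model
    only for messages the fast model is unsure about."""
    # Stage 1: cheap, high-precision rules return a label or None.
    for rule in rules:
        label = rule(msg)
        if label is not None:
            return Verdict(label, "rules")
    # Stage 2: fast classifier decides when it is confident.
    score = fast_model(msg)
    if score <= low:
        return Verdict("ham", "fast_model", score)
    if score >= high:
        return Verdict("spam", "fast_model", score)
    # Stage 3: heavier model handles the uncertain remainder.
    score = heavy_model(msg)
    label = "spam" if score >= threshold else "ham"
    return Verdict(label, "heavy_model", score)
```

The width of the `low`/`high` band controls how much traffic reaches the expensive stage, so it is effectively a knob for trading accuracy against latency and cost.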

Monitoring & Maintenance

- Drift & health: input feature stats, score distributions, label rates, calibration.

- Performance: precision/recall over time; per-slice metrics; false-positive review rate.

- Security: model fingerprinting, abuse monitoring, tamper detection.

- Retraining cadence: event-driven (performance drop, drift alert) + scheduled refresh.

- Versioning: datasets, features, and models are all versioned; reproducible training.
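Score-distribution drift is often tracked with the Population Stability Index (PSI), which compares a baseline histogram with a current one. The sketch below assumes scores in [0, 1], ten equal-width bins, and a small floor to avoid division by zero; the commonly quoted rule of thumb that values above roughly 0.25 indicate a significant shift is a convention, not a guarantee.

```python
import math

def psi(baseline, current, n_bins=10, eps=1e-6):
    """Population Stability Index between two samples of scores in [0, 1]."""
    def bin_fractions(scores):
        counts = [0] * n_bins
        for s in scores:
            # Clamp so that a score of exactly 1.0 falls in the last bin.
            counts[min(int(s * n_bins), n_bins - 1)] += 1
        # Floor empty bins at eps so the log ratio stays defined.
        return [max(c / len(scores), eps) for c in counts]

    base = bin_fractions(baseline)
    cur = bin_fractions(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))

# Toy example: the share of high scores rises from 20% to 50%.
baseline_scores = [0.15] * 80 + [0.95] * 20
current_scores = [0.15] * 50 + [0.95] * 50
drift = psi(baseline_scores, current_scores)
```

A drift alert from a check like this is one possible trigger for the event-driven retraining mentioned above, ideally confirmed against labeled data before acting.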

Ethics, Safety, and Compliance

- False positive harm: lost messages, business impact; provide appeal/override paths.

- Fairness: evaluate across demographics, languages, geographies; avoid proxy bias.

- Privacy: minimize PII usage; differential access controls; data retention limits.

- Transparency: user-visible reasons/categories where appropriate; clear policies.

- Legal/regulatory: CAN-SPAM, GDPR/CCPA, ePrivacy, local telecom rules for SMS.

Common Pitfalls

- Leakage: including future feedback in training features, or letting near-duplicates end up on both sides of a train/test split.

- Overfitting to keywords: brittle against obfuscation.

- Ignoring non-English traffic: sudden failure when markets expand.

- Static thresholds: failing to retune as base rates shift.

- Uncalibrated scores: poor downstream decisioning and triage.

Minimal Baselines (Conceptual)

- Rule-only baseline: block obvious patterns; measure precision.

- TF-IDF + Logistic Regression: strong, fast, interpretable text baseline.

- Transformer embeddings + linear head: improved semantics with manageable latency.

Use these to set expectations and detect regressions when iterating.